Rationale

How do we validate the validators? One way is to compare the output from multiple competing validators on the same set of sample PDF files. To make this a mechanical process, the validators need to speak the same language: there needs to be a common reporting format.

Proposal

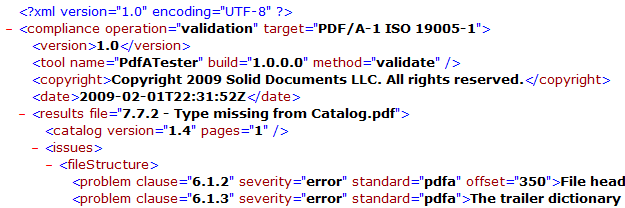

We propose an open reporting standard for PDF validators. The format is a simple XML file that isolates compliance problems by file. Problems are categorized by the clause of the ISO specification that they violate.

The screenshot above shows mainly the header area of the report. All information in the header is informative and is not required when comparing the results of two validators.

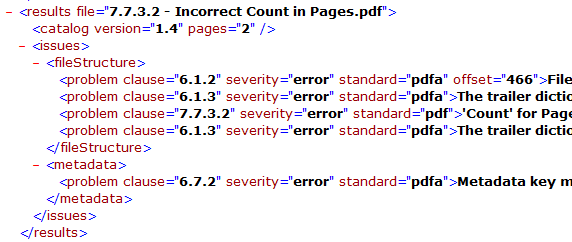

This second screenshot illustrates the elements and attributes of "issues" in the "results" for a single PDF file. The results can also include "info" and "category" elements, which carry information from the Info and Catalog (Catalog.Version and Catalog.Pages.Count) in the PDF file. If the same property appears in both Info and Metadata, the value in Metadata takes precedence.

Notice in this sample how the PDF file violates both the PDF and PDF/A standards. This is why each "problem" has a "standard" attribute: it identifies which ISO specification the "clause" refers to. While there are several informative fields such as "offset", "page", "traversalPath" and the user-readable error message, the only fields needed to compare results from multiple validators are "clause", "category", "severity" and "standard".
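As a rough illustration of the comparison fields named above, the following Python sketch reduces a report to one tuple per issue. The element and attribute names (`report`, `results`, `file`, `issue`) and all sample values are assumptions inferred from the prose, not copied from the published format, so adjust them to the real schema.

```python
import xml.etree.ElementTree as ET

# Hypothetical report fragment: element names, attribute names and values
# are assumptions for illustration, not the published schema.
SAMPLE = """
<report>
  <results file="sample.pdf">
    <issue clause="6.1.2" standard="pdfa" category="fileStructure"
           severity="error" page="1" offset="1024">Example message A</issue>
    <issue clause="7.5.2" standard="pdf" category="fileStructure"
           severity="fatalError">Example message B</issue>
  </results>
</report>
"""

# The fields the text lists as needed for comparison.
COMPARISON_FIELDS = ("standard", "clause", "category", "severity")


def comparison_keys(xml_text, fields=COMPARISON_FIELDS):
    """Return {file name: set of tuples holding only the comparison fields}."""
    root = ET.fromstring(xml_text.strip())
    keys = {}
    for results in root.iter("results"):
        issues = keys.setdefault(results.get("file"), set())
        for issue in results.iter("issue"):
            # Informative attributes (offset, page, message text) are dropped.
            issues.add(tuple(issue.get(name) for name in fields))
    return keys


keys = comparison_keys(SAMPLE)
```

The `fields` parameter is there so that a comparison tool could choose a narrower key, for example leaving out an attribute it treats as informative only.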

Clause

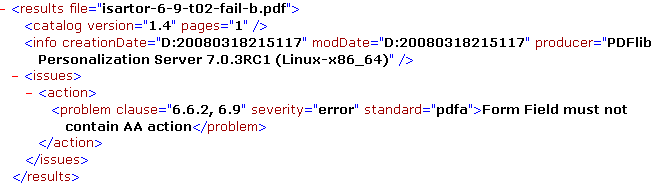

As already mentioned, "clause" refers to the number of the clause in the ISO standard that was violated (see "Standard" below). Be as granular as possible. Comparison software may reduce granularity, truncating sub-clauses from the right, in an attempt to find a match. Some problems may need to mention multiple clauses. This is done with a comma-delimited list of clause numbers, all of which must come from the same standard.
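One possible reading of the truncation rule is sketched below in Python: the more granular clause is cut from the right down to the depth of the coarser one, and the two are then compared component by component. The function names are hypothetical, and the exact matching policy of any real comparison tool may differ.

```python
def split_clauses(value):
    """Split a comma-delimited clause attribute into individual clause numbers."""
    return [part.strip() for part in value.split(",") if part.strip()]


def clause_matches(a, b):
    """True if the clauses agree once the deeper one is truncated from the right."""
    parts_a, parts_b = a.split("."), b.split(".")
    depth = min(len(parts_a), len(parts_b))
    # Compare components, not string prefixes, so "6.1" does not match "6.10".
    return parts_a[:depth] == parts_b[:depth]


def clause_lists_match(left, right):
    """True if any clause listed on one side matches any clause on the other."""
    return any(
        clause_matches(a, b)
        for a in split_clauses(left)
        for b in split_clauses(right)
    )
```

For example, `clause_matches("6.1.7.2", "6.1.7")` is true because the first value truncates to the second, while `clause_matches("6.1.7", "6.1.8")` is false. Treating a list as matched when any one pair matches is an assumption of this sketch.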

Standard

The "standard" attribute identifies the ISO specification for the "clause" for each problem. Currently valid values are "pdf" for ISO 32000-1, "pdfa" for ISO 19005-1 and "xmp" for XMP-specific issues.
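The role of "standard" as a qualifier can be sketched in Python as follows. The lookup table mirrors the three values listed above; the helper name `qualified_clause` is hypothetical.

```python
# Values of the "standard" attribute as listed in the text.
STANDARDS = {
    "pdf": "ISO 32000-1",
    "pdfa": "ISO 19005-1",
    "xmp": "XMP-specific issues",
}


def qualified_clause(standard, clause):
    """Pair a clause number with its standard so equal numbers cannot collide."""
    if standard not in STANDARDS:
        raise ValueError(f"unknown standard: {standard!r}")
    return (standard, clause)
```

With this pairing, the same clause number reported against "pdf" and against "pdfa" yields two distinct keys, which is the reason the attribute exists.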

Category

The "category" is useful for grouping results and presenting them in a human-readable form. It is ignored by software that compares results. Suggested categories include: fileStructure, xObjects, graphicStateProperties, contents, catalog, metadata, fonts, annotations, actions. More will be added in future iterations.

Severity

We currently have four levels of severity:

- warning: informative. Not a violation of the specification but probably a violation of best practices.

- error: specification violation that can theoretically be fixed with no adverse effects on the PDF file (lossless fix).

- severeError: specification violation that can theoretically be fixed, but with side effects such as a change in appearance (lossy fix).

- fatalError: specification violation that cannot be fixed.

Some cases of "fatalError" are subjective, and one could argue that there are ways to fix them. We favor a conservative approach in which "cannot be fixed" means that the problem cannot be fixed in a deterministic manner. In other words, a third party cannot reliably predict what the fix would be.
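The four levels could be modeled in Python roughly as follows. The numeric ordering (warning lowest, fatalError highest) is an assumption drawn from the order of the list above, and the helper names are hypothetical.

```python
from enum import IntEnum


class Severity(IntEnum):
    # Ordering is assumed from the order in which the levels are listed.
    WARNING = 0
    ERROR = 1
    SEVERE_ERROR = 2
    FATAL_ERROR = 3


# Map the attribute values used in reports to enum members.
_BY_NAME = {
    "warning": Severity.WARNING,
    "error": Severity.ERROR,
    "severeError": Severity.SEVERE_ERROR,
    "fatalError": Severity.FATAL_ERROR,
}


def parse_severity(value):
    """Convert a "severity" attribute value to a Severity, or raise ValueError."""
    try:
        return _BY_NAME[value]
    except KeyError:
        raise ValueError(f"unknown severity: {value!r}") from None


def is_violation(severity):
    """Warnings are informative; every other level violates the specification."""
    return severity > Severity.WARNING


def is_fixable(severity):
    """error (lossless fix) and severeError (lossy fix) can theoretically be fixed."""
    return severity in (Severity.ERROR, Severity.SEVERE_ERROR)


def worst(values):
    """Return the highest severity among a non-empty list of attribute values."""
    return max(parse_severity(v) for v in values)
```

A summary view might use `worst(...)` to show one overall level per file; that use is a suggestion of this sketch, not something the format prescribes.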

Downloads

Samples

This ZIP file contains some sample XML reports. Some of these samples illustrate what happens when two different standards, like ISO 19005-1 and ISO 32000-1, are violated in a single PDF file.

Samples: validationreportsamples.zip

Working Example

As of March 2009, the free online PDF/A validation service at www.validatepdfa.com generates reports using the Open Standard for PDF Compliance.

Isartor Truth

We have manually crafted this XML compliance report using the Isartor Test Suite documentation as our guide. Comparing this XML report to the output from a PDF/A Validator allows us to quickly and mechanically find discrepancies. This ZIP file contains a "truth" XML report for the Isartor PDF/A test suite and CompareReports.exe, a small Windows command line utility used to compare two XML compliance reports.
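The idea of a mechanical comparison against a "truth" report can be sketched in Python as a set difference per file. This is not the logic of CompareReports.exe, which is not described here; the function name, the key layout (standard, clause, severity) and the sample file names are all hypothetical.

```python
def compare_reports(truth, candidate):
    """Diff two reports given as {file name: set of hashable issue keys}.

    Returns only the files where the two reports disagree.
    """
    diff = {}
    for name in sorted(set(truth) | set(candidate)):
        expected = truth.get(name, set())
        actual = candidate.get(name, set())
        missing = expected - actual      # in the truth report, not reported
        unexpected = actual - expected   # reported, not in the truth report
        if missing or unexpected:
            diff[name] = {"missing": missing, "unexpected": unexpected}
    return diff


# Hypothetical data: keys are (standard, clause, severity) tuples.
truth = {
    "test-a.pdf": {("pdfa", "6.1.2", "error")},
    "test-b.pdf": {("pdfa", "6.1.3", "error")},
}
candidate = {
    "test-a.pdf": {("pdfa", "6.1.2", "error")},
    "test-b.pdf": {("pdfa", "6.1.4", "error")},
}

discrepancies = compare_reports(truth, candidate)
```

Here only `test-b.pdf` appears in `discrepancies`, with one missing and one unexpected issue. A fuller tool would likely relax the exact-equality test on clauses by truncating sub-clauses before comparing.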

Feedback and questions are welcome.

Isartor Truth: isartortruth.zip (1.0.0.0 - version will be incremented when updated)
